After 20 Years of Spaced Repetition, I Built a Scheduler That Trains a Neural Network on Your Phone

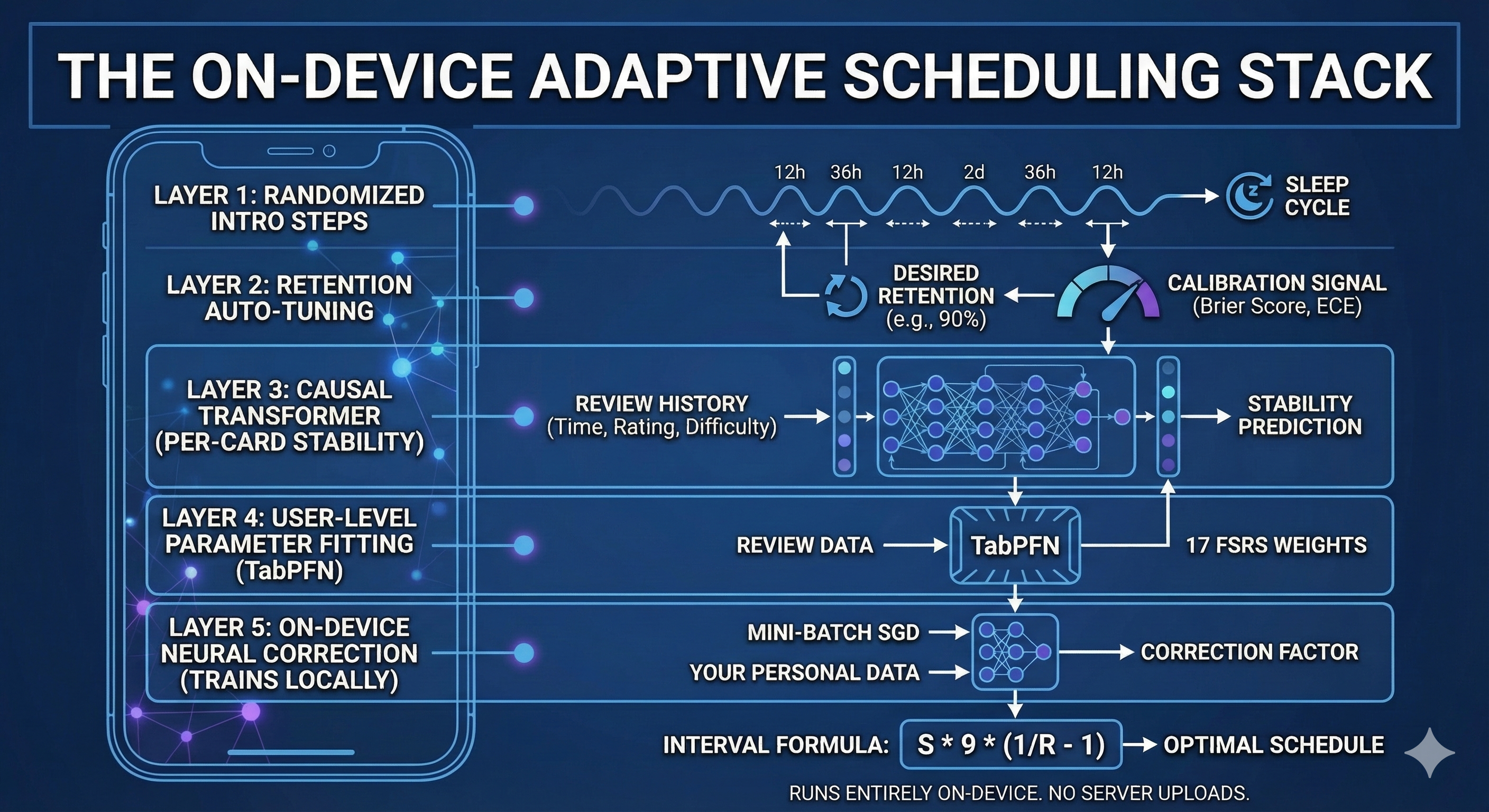

After 20 years of daily spaced repetition, I built a 5-layer adaptive scheduling stack that runs entirely on your phone — combining a causal transformer, automated retention tuning, and a neural correction network that trains on your personal review data to capture patterns that FSRS's fixed formula can't. Every model runs on-device via CoreML and pure Swift, so nothing ever leaves your phone. It's live in Anchrs for iOS with Anki import support.

I've been using spaced repetition for about 20 years. I started with languages — vocabulary drills that

eventually became conversational fluency — and later used it to prep for software engineering interviews

and retain technical knowledge across jobs. I've lived through every era of SRS: Mnemosyne, SM-2 Anki,

and now FSRS. Each generation was a genuine step forward.

But after two decades of daily reviews, you start to notice things the algorithm doesn't. Cards that

should be easy keep coming back too early. Cards you know are about to lapse get scheduled too late.

Your retention shifts when life gets busy, or when you're sleeping poorly, or when you switch between

studying dense technical material and casual vocabulary — and the system takes weeks to catch up.

FSRS was a huge improvement. Fitting 17 parameters to your personal review history instead of using

fixed intervals is objectively better than SM-2. But after living with it for a while, I started to

suspect the formula itself was the bottleneck. Not the parameter values — the shape of the math.

There are patterns in how we forget that a single closed-form equation can't capture, no matter how

well you tune its 17 weights.

So I did what any engineer who's been annoyed for long enough eventually does. I built something.

What I built

A 5-layer adaptive scheduling stack that runs entirely on-device. Each layer handles something the

layer below it can't.

Layer 1: Randomized intro steps

New cards in most SRS apps get a fixed first interval — usually "1 day." This creates two problems:

if you study 50 new cards today, all 50 come back tomorrow, creating a review spike that makes your

next session miserable. And a fixed 24-hour interval doesn't force overnight memory consolidation

for cards you studied in the morning.

The fix is simple: randomize the first interval within a range (12-36 hours for step 1, 1-3 days

for step 2). This spreads review load across days and guarantees at least one sleep cycle before

the model takes over scheduling. A small change with a surprisingly large effect on how your daily

review count feels.

Layer 2: Retention auto-tuning

Most SRS apps let you set a "desired retention" target — typically 90%. But this is a static number.

How do you know 90% is right for you, right now?

The system continuously tracks a calibration signal. Not just "are you getting cards right?" — that

metric is misleading because it's confounded by the intervals the scheduler itself chose. (If I

schedule everything at 1-day intervals, you'll get 99% of cards right. That doesn't mean the

scheduler is good. It means it's wasting your time.)

Instead, the system measures whether its probability predictions are accurate: when it predicts a

90% chance of recall, does that actually happen ~90% of the time? This is called calibration, and it's

measured via Brier score, Expected Calibration Error (ECE), and log-loss — standard ML metrics, but

rarely applied to spaced repetition.

The auto-tuner uses this calibration data as a feedback loop. If you're consistently beating the

scheduler's predictions (actual retention > predicted retention), it stretches your intervals by

lowering the target. If you're struggling, it tightens them. A negative feedback loop that converges

on your actual optimal workload — no manual slider adjustment required.

Layer 3: A causal transformer for per-card stability prediction

This is where it diverges from FSRS fundamentally.

FSRS models memory with a specific mathematical formula. After a successful recall, stability

updates as:

S' = S * (e^w8 * (11 - D) * S^(-w9) * (e^(w10*(1-R)) - 1) * modifier + 1)

This formula has 17 tunable weights, but its shape is fixed. It assumes stability growth follows

a specific functional form involving exponentials of difficulty and retrievability. If the true

relationship between your review history and your memory stability doesn't match this shape, no

amount of weight-tuning will fix it.

We trained a causal transformer — similar in architecture to a small language model — that reads the

raw review history of each card (up to 64 reviews: elapsed time, rating, stability snapshot,

difficulty) and outputs a stability prediction directly. No formula constraint. The model learns

whatever mapping from review sequences to stability best fits the training data.

This lets it capture patterns that FSRS's functional form literally cannot express: interactions

between time-of-day and retention, card-specific difficulty trajectories that don't follow the

mean-reversion formula, and nonlinear effects of review spacing patterns.

The model ships as a CoreML bundle and runs as a single forward pass on-device. No network calls.

Layer 4: User-level parameter fitting (TabPFN)

This layer does the same thing as FSRS's optimizer — fitting the 17 scheduling weights to your

personal review history — but via a different mechanism.

FSRS uses gradient descent (or coordinate descent in some implementations): start with default

weights, compute a loss function over your review history, adjust weights in the direction that

reduces loss, repeat.

We use a TabPFN (Tabular Prior-Fitted Network). This is a neural network pre-trained on millions

of synthetic parameter-fitting problems, so that at inference time it can predict the optimal

parameters in a single forward pass. No iterative optimization, no learning rate tuning, no

convergence issues. You feed it your review history; it outputs 17 optimized weights.

If the CoreML model isn't available, the system falls back to a multi-start coordinate descent

optimizer with adaptive step sizes — coarse steps that halve each pass, with random restarts

to escape local minima. Both paths produce the same kind of output: personalized FSRS weights

for the standard interval formula.

Layer 5: An on-device neural correction network

This is the layer I'm most excited about, because it's the one that makes the system get better

the longer you use it.

Layers 3 and 4 are pre-trained models. They're good — much better than defaults — but they were

trained on population-level data. They don't know you.

Layer 5 is a small neural network (a 5-input, 16-hidden, 1-output MLP) that trains on your

phone, using only your review data. It learns a multiplicative correction factor that adjusts the

stability predictions from Layers 3 or 4:

corrected_S = base_S * exp(MLP(features))

The five input features are:

- Base stability (log-scaled)

- Elapsed days since last review (log-scaled)

- Normalized user rating (0 = Again, 1 = Easy)

- Card difficulty (log-scaled)

- Number of prior reviews (log-scaled)

The training uses the same loss function as the offline model training: binary cross-entropy

computed through the full FSRS power-law retrievability formula. The gradient flows backward

through:

loss = -[y*log(R) + (1-y)*log(1-R)]

where R = (1 + delta_t / (9 * corrected_S))^(-1)

This means the network isn't just learning generic corrections — it's optimizing predictions of

your actual recall probability, measured against what you actually remembered and forgot.

The training loop uses mini-batch SGD with momentum (0.9), L2 regularization toward the identity

correction (so untrained weights produce factor = 1.0, changing nothing), and gradient clipping to

prevent instability. It retrains automatically when enough new reviews accumulate, and persists its

learned weights across app sessions.

How all five layers work together

Every layer produces a stability value. The interval formula is always the same — the one FSRS

uses:

interval = S * 9 * (1/R_desired - 1)

The layers just disagree about what S should be, and the system picks the best available signal:

- Is the card still in intro/relearning steps? Use the randomized step intervals (Layer 1).

- Is the card graduated? Compute desired retention from calibration data (Layer 2).

- Can the causal transformer predict stability for this card? Use it (Layer 3).

- Otherwise, use personalized FSRS parameters from TabPFN/MLE (Layer 4).

- If the on-device personalizer has been trained, apply its correction to either Layer 3 or 4's output (Layer 5).

Layer 2 affects the R_desired in the formula. Layer 5 adjusts the S. They compose naturally.

How I know it works

This is the part most SRS apps skip. It's easy to claim "our algorithm is better." It's hard to

measure it rigorously.

I track three calibration metrics in real-time:

Brier Score — the mean squared error between predicted recall probability and the actual

binary outcome (recalled or not). Range is 0 to 1; lower is better. A perfectly calibrated

scheduler that predicts 90% and you recall 90% of the time scores near 0.

Expected Calibration Error (ECE) — divides predictions into probability bins (0-10%, 10-20%,

etc.) and measures how far off the average prediction is from the actual recall rate within each

bin. Catches systematic over- or under-confidence at specific probability ranges.

Log-Loss — binary cross-entropy, the standard ML evaluation metric. More sensitive to

confident wrong predictions than Brier score.

These metrics answer the question: "When the scheduler says 85% chance of recall, does the user

actually recall ~85% of the time?" If yes, the scheduler is calibrated — and a calibrated

scheduler is one that gives you optimal intervals by definition, because the interval formula

assumes calibrated probability estimates.

Why on-device

Twenty years of spaced repetition data is an intimate map of what you know and don't know. The

review history for my language decks reveals what words I struggle with, what grammar patterns

haven't solidified, when in the day my memory is sharpest. I would not be comfortable uploading

that to a server.

The entire stack runs locally:

- CoreML models for the transformer and TabPFN (inference only, no network calls)

- Pure Swift mini-batch SGD for the on-device personalizer (trains locally, ~20ms for 500 examples)

- Calibration tracking in-memory with periodic persistence

- All weights stored in UserDefaults on your device

Nothing leaves your phone. This isn't a privacy policy promise — it's an architectural constraint.

There's no server endpoint to send data to even if we wanted to.

What this isn't

I'm not claiming to have "solved" spaced repetition. After 20 years I've learned that memory is

messier and more individual than any model captures. Context, sleep, stress, motivation — these all

affect retention in ways no scheduler handles well yet.

What I am claiming is narrower: there is meaningful signal in your review history that a

17-parameter closed-form formula leaves on the table, and neural models — especially ones that

train on your personal data, on your device — can capture some of it.

The relationship to FSRS

I want to be clear about this because I have deep respect for the FSRS project and Jarrett Ye's

work. FSRS was a step-function improvement for the entire SRS ecosystem. Our system doesn't

replace it — it builds on it.

Layer 4 is FSRS parameter optimization, just via a different mechanism. The interval formula is

the same. The 17-parameter space is the same. What we add is what happens above and below that

layer: neural models that aren't constrained by the formula (above) and an on-device learning loop

that personalizes beyond what population-level training captures (below).

If FSRS were to adopt per-card transformer predictions or on-device fine-tuning tomorrow, I'd be

thrilled. The point is the approach, not ownership of the idea.

Try it

This is live in Anchrs for iOS/macOS. It supports Anki import (.apkg) if you

want to bring over existing decks, and it runs on iPhone, Mac, and Apple Watch.

I'd genuinely love feedback from people who've been using SRS long enough to have strong opinions.

If you've been doing daily reviews for years, you have intuitions about scheduling that no

benchmark captures — and those intuitions are exactly what I want to test this against.